Secure Static Website Deployment

We are excited to launch our new website this week! We rewrote the entire site and designed a new look for it. Then came the challenge of incorporating what had been a separate blog. In the end, we went with what we feel is a very secure setup, and we’d like to share it with others looking to accomplish something similar.

TL;DR

We consolidated an old PHP main site on one server and a separate Ghost CMS blog on another into one cohesive site, using a combination of a Static Site Generator (SSG) and serverless architecture.

The site's attack surface is much smaller, it's much faster, and it costs much less - WIN!

The problem with traditional website hosting

What could be worse than a security company’s website getting hacked? We’re busy trying to improve other companies’ security postures, so we’d rather not spend time patching, monitoring, and updating our own servers. For that matter, I’m sure many other companies would rather focus on their core vision than worry about web server security.

For most organizations, the decision comes down to a few main options:

- Managed CMS (WP Engine, SiteGround, Bluehost, etc.)

- Self-hosted CMS (WordPress, Ghost, Drupal, etc.)

- Managed Website Builders (Squarespace, Wix, Medium, etc.)

- Self-hosted custom sites (PHP, .NET, etc.)

There are many reasons why someone would want to go with one of these services, but there are also some potentially significant downsides. You can find the pros of these services on the providers’ marketing pages, so I’ll focus here on why these options weren’t good for Fracture Labs. Many of these issues could be mitigated with the right design upfront and proper ongoing care, but again, the point here is to eliminate as many attack paths as possible so we don’t even have to worry about what could happen.

Issues with most website options

- Cost - whether you’re paying for managed hosting or hosting it yourself and spending time and money maintaining it, the costs can add up quickly.

- Control - there are plug-ins to handle almost any need, but you’re still limited by what the software allows. You might have more specific needs that a rigid CMS just can’t meet.

- Administration - these systems are administered directly on the web server, which means that anyone who gains access to the system can completely take it over to steal data, serve malware, or attack other servers as part of a botnet.

- Security Maintenance - making sure your servers are patched routinely and promptly can take a lot of effort. The same goes for ensuring only secure code makes it through publishing. And dealing with third-party plug-ins? How often do most companies go back and check whether their old plug-ins have any known vulnerabilities?

Goals for a low-maintenance, secure website

Well, I guess the goals are pretty much the inverse of the issues I just mentioned!

- Low cost, but fast

- High degree of control

- Easy to administer, but difficult for an attacker to take control

- As close to set-it-and-forget-it as possible

Our solution

We now have a fully serverless site that provides all of the functionality we’re looking for at blazing-fast speeds, at low cost, and with a minimal attack surface! Boom!

Static Site Generators (SSG)

It’s great to have a dynamic website, especially for blogging. But dynamic websites inherently present a large attack surface. Whether the risks come from poor coding, out-of-date plug-ins, or brute-force attacks against authentication modules, that dynamic capability introduces more risk than necessary if you’re able to take advantage of SSGs instead.

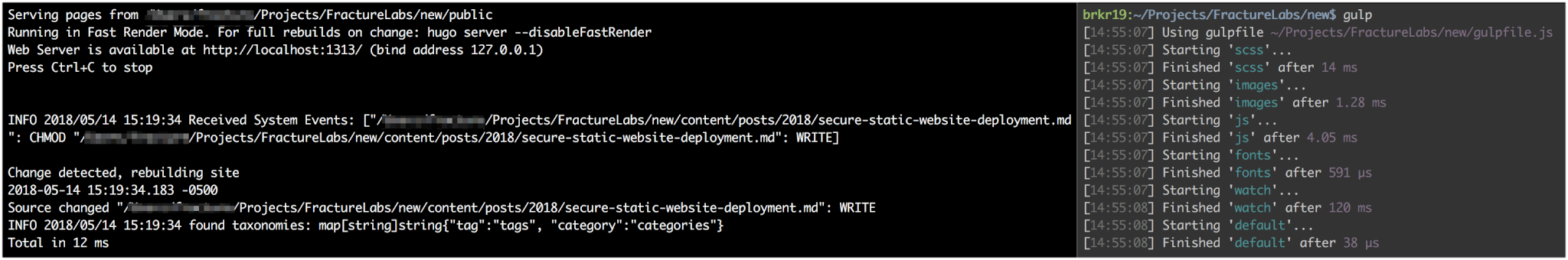

In our case, we had to ramp up on some of the newfangled DevOps tools, but it was well worth it. We’re now building the static site using a combination of Gulp for our SCSS and JS files, and Hugo for our templating and dynamic-to-static conversion. A big shout-out goes to @CaseyCammilleri from Sprocket Security for teaching me about the SSG concept during one of our chats.
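To show what "dynamic-to-static conversion" means in practice, here is a deliberately tiny Python sketch of what an SSG does at build time. It is not how Hugo works internally and it does not parse Markdown. It assumes hypothetical `content/*.md` files, treats blank-line-separated blocks as paragraphs, and writes one `public/<slug>/index.html` per page. The point is that all rendering happens once, on the build machine, so the web host only ever serves plain files.

```python
from __future__ import annotations

import html
from pathlib import Path
from string import Template

# Hypothetical page template; Hugo uses its own Go-template layouts instead.
PAGE = Template(
    "<!doctype html>\n"
    "<html><head><meta charset=\"utf-8\"><title>$title</title></head>\n"
    "<body>\n<h1>$title</h1>\n$body\n</body></html>\n"
)


def render_page(title: str, text: str) -> str:
    """Turn plain text into a complete HTML page (no Markdown parsing here)."""
    # Blank-line-separated blocks become paragraphs; all text is HTML-escaped.
    blocks = [b.strip() for b in text.split("\n\n") if b.strip()]
    body = "\n".join(f"<p>{html.escape(b)}</p>" for b in blocks)
    return PAGE.substitute(title=html.escape(title), body=body)


def build(content_dir: Path, public_dir: Path) -> list[Path]:
    """Render every content_dir/*.md file to public_dir/<slug>/index.html."""
    written = []
    for src in sorted(content_dir.glob("*.md")):
        title = src.stem.replace("-", " ").title()
        out = public_dir / src.stem / "index.html"
        out.parent.mkdir(parents=True, exist_ok=True)
        out.write_text(render_page(title, src.read_text(encoding="utf-8")), encoding="utf-8")
        written.append(out)
    return written
```

Calling `build(Path("content"), Path("public"))` would leave a folder of ready-made HTML files that any static host, such as an S3 bucket, can serve as-is, with no code running at request time.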

So this all means we get to write the site content in Markdown in Sublime, while Gulp and Hugo watch for changes, rebuild in real time, and provide a smoking fast local development environment for us to test against before publishing.

The Gulp build command is simply `gulp`, which builds everything according to my gulpfile.js configuration.

The Hugo script we use for development (might have some redundant calls in it, but this is what we landed on) is also pretty easy:

```shell
rm -rf public/* && hugo && hugo server -Dwv --renderToDisk
```

Better yet, our old blog, self-hosted in Ghost, was also written in Markdown. All of the articles moved over flawlessly to this new system, so we didn’t lose anything or have to rewrite it all!

So at this point, we’ve eliminated all server-side code execution while keeping the templating and Markdown-based authoring we liked about a dynamic CMS!

Serverless Hosting

OK, let me start by saying I’m not a big fan of the term ‘serverless computing’. I get the intention, but obviously there are still servers doing all of the dirty work behind the scenes. I guess it just comes down to this: I don’t need to know about or manage any of the servers that host our site. You could say the same about any managed solution, really.

Anyway, now that we had a static site, why maintain a server just to host it? We were already running the site on an AWS EC2 server, so we decided to serve it from AWS S3 instead. Deploying to AWS is incredibly quick and easy:

```shell
rm -rf public/* && hugo && aws s3 sync <src folder> s3://<bucketname> --acl public-read
```
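Once CloudFront sits in front of the bucket (see the SSL/TLS section), a deploy usually needs one more step: invalidating CloudFront's cache so visitors don't keep seeing old pages until the cached copies expire. The Python sketch below wraps the same `hugo` and `aws s3 sync` commands as the one-liner above, plus an optional `aws cloudfront create-invalidation` call. The bucket name and distribution ID are placeholders, and by default it only prints the commands.

```python
import shlex
import shutil
import subprocess
from pathlib import Path
from typing import List, Optional


def deploy_commands(bucket: str, distribution_id: Optional[str] = None,
                    src: str = "public/") -> List[List[str]]:
    """Return the CLI steps for one deploy, mirroring the one-liner above."""
    commands = [
        ["hugo"],  # renders into Hugo's default publishDir; src should match it
        ["aws", "s3", "sync", src, f"s3://{bucket}", "--acl", "public-read"],
    ]
    if distribution_id:
        # Optional extra step: expire CloudFront's cached copies of every path.
        commands.append(["aws", "cloudfront", "create-invalidation",
                         "--distribution-id", distribution_id, "--paths", "/*"])
    return commands


def deploy(bucket: str, distribution_id: Optional[str] = None,
           src: str = "public/", dry_run: bool = True) -> None:
    """Print each step; only clean src and run the steps when dry_run is False."""
    if not dry_run:
        # Same effect as `rm -rf public/*`: start each build from a clean folder.
        shutil.rmtree(Path(src), ignore_errors=True)
    for cmd in deploy_commands(bucket, distribution_id, src):
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # abort on the first failing step
```

Note that `aws s3 sync` only uploads new and changed files. It does not remove objects whose source files were deleted unless you add `--delete`.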

What about Contact Forms?

OK, so this is usually the big hang-up with fully static sites. How can you incorporate a contact form on a static site? Sure, you could use one of the many “Contact Us” form providers (FormKeep, Formspree, etc.), but then you’re trusting them with your customers’ potentially sensitive requests and might have to pay for it as well.

This is where Function as a Service (FaaS) comes into play. In our case, we continued down the AWS route and implemented the contact form with an API Gateway endpoint, a Lambda back end, and Amazon Simple Email Service (SES) to send the email. API Gateway has some decent built-in throttling to rate-limit requests, but we’ve added a CAPTCHA to the form as well.
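Here is a minimal sketch of what the Lambda behind such an endpoint could look like in Python. Everything specific here is an assumption, not our production code:

- The form posts JSON with `name`, `email`, `message` and `captcha` fields.
- API Gateway uses the REST API Lambda proxy integration.
- The CAPTCHA is Google reCAPTCHA, checked against its `siteverify` endpoint.
- `CONTACT_TO`, `CONTACT_FROM`, `RECAPTCHA_SECRET` and `ALLOWED_ORIGIN` are hypothetical environment variable names.

SES only sends from verified identities, so the `CONTACT_FROM` address must be verified in SES first.

```python
import base64
import json
import os
import re
import urllib.parse
import urllib.request
from typing import Any, Callable, Dict, Optional

# Hypothetical settings, read from Lambda environment variables.
TO_ADDRESS = os.environ.get("CONTACT_TO", "contact@example.com")
FROM_ADDRESS = os.environ.get("CONTACT_FROM", "no-reply@example.com")  # must be SES-verified
RECAPTCHA_SECRET = os.environ.get("RECAPTCHA_SECRET", "")
ALLOWED_ORIGIN = os.environ.get("ALLOWED_ORIGIN", "https://www.example.com")
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")  # loose sanity check only
MAX_MESSAGE_CHARS = 5000


def verify_recaptcha(token: str, remote_ip: Optional[str] = None) -> bool:
    """Ask Google's reCAPTCHA siteverify endpoint whether the token is valid."""
    fields = {"secret": RECAPTCHA_SECRET, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip
    req = urllib.request.Request(
        "https://www.google.com/recaptcha/api/siteverify",
        data=urllib.parse.urlencode(fields).encode(),
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        return bool(json.load(resp).get("success"))


def _response(status: int, message: str) -> Dict[str, Any]:
    # Response shape expected by API Gateway's Lambda proxy integration.
    return {
        "statusCode": status,
        "headers": {
            "Content-Type": "application/json",
            "Access-Control-Allow-Origin": ALLOWED_ORIGIN,  # the static site's origin
        },
        "body": json.dumps({"message": message}),
    }


def handler(event: Dict[str, Any], context: Any = None, *,
            ses_client: Any = None,
            captcha_check: Callable[[str, Optional[str]], bool] = verify_recaptcha) -> Dict[str, Any]:
    try:
        raw = event.get("body") or "{}"
        if event.get("isBase64Encoded"):
            raw = base64.b64decode(raw).decode("utf-8")
        form = json.loads(raw)
    except ValueError:  # covers bad JSON, bad base64 and bad UTF-8
        return _response(400, "Request body must be JSON")
    if not isinstance(form, dict):
        return _response(400, "Request body must be a JSON object")

    # Collapse whitespace in the name so it is safe to put in a subject line.
    name = " ".join(str(form.get("name", "")).split())[:100]
    email = str(form.get("email", "")).strip()
    message = str(form.get("message", "")).strip()
    token = str(form.get("captcha", ""))

    if not name or not message or not EMAIL_RE.fullmatch(email):
        return _response(400, "Missing or invalid fields")
    if len(message) > MAX_MESSAGE_CHARS:
        return _response(400, "Message too long")

    identity = (event.get("requestContext") or {}).get("identity") or {}
    if not token or not captcha_check(token, identity.get("sourceIp")):
        return _response(403, "CAPTCHA verification failed")

    if ses_client is None:
        import boto3  # bundled with the AWS Lambda Python runtimes
        ses_client = boto3.client("ses")

    ses_client.send_email(
        Source=FROM_ADDRESS,
        Destination={"ToAddresses": [TO_ADDRESS]},
        ReplyToAddresses=[email],  # replying goes straight to the visitor
        Message={
            "Subject": {"Data": f"Website contact from {name}"},
            "Body": {"Text": {"Data": f"From: {name} <{email}>\n\n{message}"}},
        },
    )
    return _response(200, "Thanks, we'll be in touch!")
```

Lambda calls `handler(event, context)`, so the defaults apply in production. The SES client and CAPTCHA check are keyword parameters only so the function can be exercised locally with fakes, for example `handler(event, None, ses_client=fake, captcha_check=lambda token, ip: True)`.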

How about SSL/TLS?

I believe you should configure every site to work over SSL/TLS, regardless of whether you are accepting sensitive data from visitors. Besides Google using HTTPS as a positive signal in search ranking, it gives your visitors an additional level of assurance, since they can verify that the site they are on is really yours.

To easily serve our site over HTTPS, we created a CloudFront distribution for it using a certificate issued by AWS Certificate Manager (ACM). No more spending hundreds of dollars on a certificate or dealing with the frequent renewals associated with Let’s Encrypt (still a great option for other sites - check them out if you haven’t already).
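After pointing your domain at the CloudFront distribution, you can check which certificate is actually being served. This sketch uses only Python's standard `ssl` module. It performs the same chain and hostname verification a browser does and then returns the certificate's details. The hostname in the usage note is a placeholder for your own domain.

```python
import socket
import ssl
from typing import Any, Dict


def verifying_context() -> ssl.SSLContext:
    # create_default_context() loads the system's trusted CAs, requires a
    # valid certificate chain and checks that the certificate matches the hostname.
    return ssl.create_default_context()


def served_certificate(host: str, port: int = 443, timeout: float = 5.0) -> Dict[str, Any]:
    """Connect like a browser would and return the certificate the server presents.

    Raises ssl.SSLCertVerificationError if the chain or hostname does not check out.
    """
    ctx = verifying_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()
```

For example, `served_certificate("www.example.com")["notAfter"]` shows when the certificate expires. `dict(item for rdn in cert["issuer"] for item in rdn)` flattens the issuer into a plain dict you can read. If verification fails, the call raises instead of returning, which is exactly the check that protects your visitors.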

Where does this leave us?

Well, at this point, with a minimal amount of effort upfront, we now have a secure website running on a serverless architecture. We don’t need to worry about ensuring Apache/Nginx are patched immediately after a vulnerability report, or scanning server-side source code for potential vulnerabilities. We only need to focus on properly protecting our AWS accounts and access keys and, most importantly, on creating content. Our AWS bill is also much lower than what we’d spend on a complicated CMS solution. Not to mention, we know AWS will be able to handle the load when our next hot blog post goes viral!

Let us know what you think

Please share this post if you found it useful and reach out if you have any feedback or questions!

Big Breaks Come From Small Fractures.

You might not know how at-risk your security posture is until somebody breaks in . . . and the consequences of a break-in could be big. Don't let small fractures in your security protocols lead to a breach. We'll act like a hacker and confirm where you're most vulnerable. As your adversarial allies, we'll work with you to proactively protect your assets. Schedule a consultation with our Principal Security Consultant to discuss your project goals today.